I may have skipped documenting some homelab generation upgrades here. However, I have updated the page as far as I could, and with this blog post I would also like to give you a brief update on my current homelab setup.

Last year, my wife and I moved into our own house. Yes, I married my love and we built a house. I didn’t shout about it because it’s something personal and I don’t need to rub it in everyone’s face. But yes, I’m a married house owner now and a loving father. Oh, I forgot to mention that my wife gave birth to a beautiful son this year. So many things happened! But anyway, back to the topic.

You may have seen some images I posted on Twitter last year of the huge IT rack I got my hands on, and of the first “production” deployment in my new homelab rack. This “production” deployment was an actual beer fridge that was small enough to fit into that rack. If you don’t believe me, go ahead and check the pictures here. The beer fridge is still there, but the huge and heavy IT rack is gone. It has been replaced by a desktop-size rack from StarTech.com, which is big enough to hold my SuperMicro servers and networking equipment.

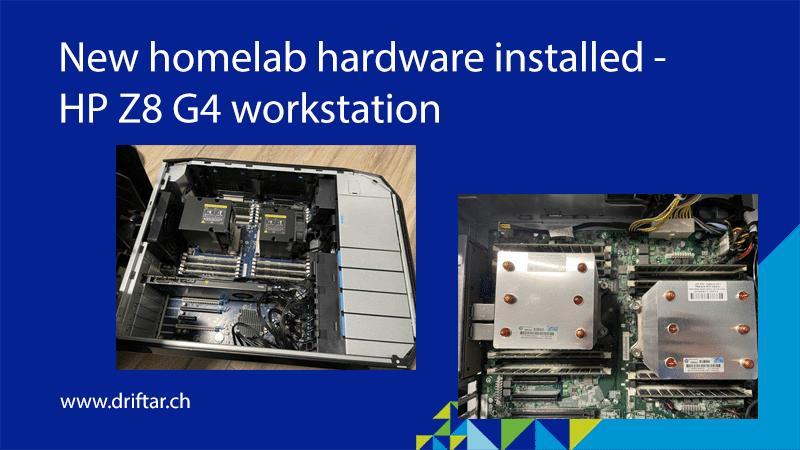

But the main topic in this blog post is the recently acquired hardware. I bought a refurbished HP Z8 G4 workstation!

What about the “why”?

Lucky me, there is a dealer of refurbished hardware in the next city! There’s no chance of finding something good or reasonably priced on an auction platform in Switzerland, not to mention searching on eBay and literally burning your wallet on international shipping and freight costs… But why a workstation? Well, I stumbled across a Tweet from Nick Kuhn where he was showing HP workstations as his homelab hosts for his #100DaysOfHomeLab project. So I thought, why not use a workstation as an ESXi host instead of actual server hardware? A workstation seems to be a much more “handy” size compared to a full 19-inch rack server. So I had some thoughts:

- It’s OK for me if the hardware (or part of it) is NOT on the VMware HCL

- I want to get rid of the rack, so rack-mounted server hardware (with rack rails) is no longer an option anyway

- Should there be many physical hosts with little hardware or one large host with a lot of hardware?

I decided to go with one beefy workstation because of the available space and the things I want to run on it. This workstation is solely for the actual lab. All the homelab services, like the domain controller, homelab DNS, vCenter, and some production services for my home network, are running (and will keep running) on the SuperMicro 3-node all-flash vSAN cluster. This beefy workstation will hold a bunch of nested ESXi hosts, and maybe some other VMware solutions; I’m not yet sure which ones. We will see.

That’s the BOM

I know what you’re all waiting for. The BOM – the bill of materials. The list of hardware parts and the price tag(s). Well. I can’t provide you with a huge list of every single item. But I’ll try my best to reflect on the things I bought.

The foundation of this new homelab host is an HP Z8 G4 workstation. It cost me 1790 Swiss francs, which at the time of writing (August 5, 2023) is roughly 2040 USD. As already mentioned, it is a refurbished workstation. I bought it in the following configuration:

- 2x Intel Xeon Gold 6134 @ 3.20 GHz (yes, a freaking dual-socket system!)

- 256 GB memory (DDR4-2666)

- 1 TB SSD

- NVIDIA Quadro P4000 8 GB

The HPE ProLiant ML150 Gen9 I mentioned in homelab generation 6b was running with 128 GB of memory. Lucky me, it was also DDR4 memory, but only 2133 MHz, so a bit slower. Does it make a difference? Well, no, not for me and my homelab, and not for the workloads I’m planning to run. So I frankensteined some hardware from that ML150 Gen9 into my new workstation ESXi host: the memory, the Intel Optane drives with their NVMe-to-PCIe adapters, and a dual-port 10 Gig NIC. All this hardware found its place in the Z8 G4 workstation.

Since my two weeks of vacation were already over, I didn’t have much time to set things up. I managed to frankenstein the hardware, run some tests, and, after a lot of trial and error, set up a nested vSphere environment.

So more blog posts may follow. Stay tuned!

What 10 Gb network card did you use? Did the Z4 come with the power cable for the P4000?

Thanks

Hi Justin,

I’m using an Intel Ethernet Controller X540-AT2. I’m not sure, but I might have frankensteined it out of some other old server before I disposed of that server properly.

This card is obviously old. According to Intel, it was launched in Q1’12 and is now discontinued.

https://ark.intel.com/content/www/us/en/ark/products/60020/intel-ethernet-controller-x540at2.html

Best regards,

Karl

Thanks, I did end up getting a similar setup to yours (Z8 G4, P4000). I did encounter a couple of errors with the ESXi 8 install: with Secure Boot turned on, I get a firmware error when booting ESXi and the computer hangs. Using legacy boot, everything works OK.

Could you share what VM settings you used for the P4000? When connected through vSphere 8, it shows that I am using the VMware SVGA, not the P4000. Thanks in advance!

I had to enable GPU passthrough on the ESXi host before I could do anything with the graphics card. Then I had to run some esxcli commands to pass that GPU through to the VM. In the end it worked, but I’m not sure if it was the correct way.

One important thing to mention: if only one host has a GPU and your other hosts do not, you can’t migrate the VM to another host. Therefore, I removed the GPU from my VM again. Maybe I’ll do some more testing soon.