It has been some time since I published my last blog article. I wasn’t really in the mood for it because I am learning and preparing for my VCP6-DCV, and there is a hell of a lot of stuff to cram into my brain. This week I’m publishing some articles, beginning with this one. Not because I’ve stopped learning for the VCP, but because I’m learning right now and attending the VMware Virtual SAN Deploy and Manage course. Let’s call this a recap. My brain is still collecting data and sorting it onto the right shelves, and this recap helps me with that. But let’s start now. You probably don’t want to know what’s going on in my brain…

Day 1

Module 1 – Introduction to the course

To break the ice, our instructor Paul McSharry started with a short introduction round for all attendees. Paul introduced himself (and his cat too…). I didn’t know him personally; I just knew that he is an expert in his area and an instructor. I had heard from people I know that Paul does his stuff very well, so I expected a good start, and in the end the whole day was great.

First of all, it’s now official that it’s called vSAN (with a small v in front) and not VSAN. VMware recently changed the name of this product. The new spelling should show that vSAN is integrated directly into the ESXi hypervisor. vSAN is a policy-driven software-defined storage tier. It doesn’t depend on a VM; it runs directly in the hypervisor. We all knew that. You don’t need special software or a plugin to use vSAN, it’s just a matter of licensing. But now the name of the product makes that clear too.

A customer wants software-defined storage to be flexible, easy to install and use, quick and scalable. He doesn’t want to compromise on performance. And it should run in his private cloud and in his public cloud too.

Because of the size of my customers I don’t often deal with scalability. I feel somewhat ashamed of that. We always calculate some reserves into the systems, because we know our customers and always clarify their needs. Now I’m 100% sure what scale-up and scale-out mean. You scale up when you add disks to your hosts to increase the capacity (or caching) tier in Virtual SAN. You scale out when you add one or more hosts to your vSAN cluster to increase overall performance and capacity.
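To keep the two terms apart, here is a small Python sketch of the idea. The `Host` class, the host names and the disk sizes are made up for illustration; nothing here talks to a real vSAN cluster, and it only counts raw capacity.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Host:
    """Hypothetical model of a vSAN host: only its capacity disks, in GB."""
    name: str
    capacity_disks_gb: List[int] = field(default_factory=list)


def raw_capacity_gb(cluster: List[Host]) -> int:
    """Sum the raw capacity of all capacity disks in all hosts."""
    return sum(sum(h.capacity_disks_gb) for h in cluster)


def scale_up(host: Host, disk_gb: int) -> None:
    """Scale-up: add a disk to an existing host."""
    host.capacity_disks_gb.append(disk_gb)


def scale_out(cluster: List[Host], new_host: Host) -> None:
    """Scale-out: add a whole host (disks plus CPU and memory) to the cluster."""
    cluster.append(new_host)


# Three example hosts with two assumed 1000 GB capacity disks each.
cluster = [Host(f"esx0{i}", [1000, 1000]) for i in range(1, 4)]
print(raw_capacity_gb(cluster))  # 6000

scale_up(cluster[0], 1000)       # one more disk in the first host
print(raw_capacity_gb(cluster))  # 7000

scale_out(cluster, Host("esx04", [1000, 1000]))  # a fourth host joins
print(raw_capacity_gb(cluster))  # 9000
```

Scale-up only grows the disk count of one host, while scale-out also brings additional CPU and memory into the cluster, which this sketch does not model.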

Module 2 – Storage fundamentals

In the second module we talked about some basic storage stuff like spinning disks (rotating rust) and SSDs, about IOPS and so on. We discovered some good points about latency, and why it’s good to have at least a flash cache in a hybrid vSAN, or better, to go all-flash. That doesn’t mean spinning disks are old school and you shouldn’t use them. They are great when you compare price and capacity. Think about an archive system or a huge backup storage. For these use cases spinning disks still deliver a fair amount of IOPS.
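For a feeling of where the latency of a spinning disk comes from, a common rule of thumb estimates random IOPS as one divided by the sum of average seek time and average rotational latency (half a revolution). The sketch below is not from the course material, and the seek times in it are assumed example values, not vendor data.

```python
def rotational_latency_ms(rpm: int) -> float:
    """Average rotational latency: half a revolution, in milliseconds."""
    return 0.5 * 60_000 / rpm


def estimated_iops(rpm: int, avg_seek_ms: float) -> float:
    """Rule-of-thumb random IOPS for a single spinning disk."""
    return 1000 / (avg_seek_ms + rotational_latency_ms(rpm))


# The seek times are assumptions for illustration only.
for rpm, seek_ms in [(7200, 8.5), (10_000, 4.5), (15_000, 3.5)]:
    print(f"{rpm} rpm: roughly {estimated_iops(rpm, seek_ms):.0f} IOPS")
```

Flash has no seek or rotation at all, which is why even a flash cache in front of spinning disks helps so much with latency.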

We also took a short look back at RAID levels. It’s always good to know them and to hear about them from time to time. When you’re using vSAN you don’t have to create a RAID array on your built-in storage controller. Just have the controller pass the disks through to ESXi / vSAN and it’s all good. Storage protocols like Fibre Channel (FC), iSCSI and NFS were also worth a discussion. And last but not least the VMware HCL, the hardware compatibility list. Always check it if you build your own vSAN ready nodes, or even if you upgrade firmware (especially disk / controller firmware) on a certified vSAN ready node.

Module 3 – What is vSAN and use cases

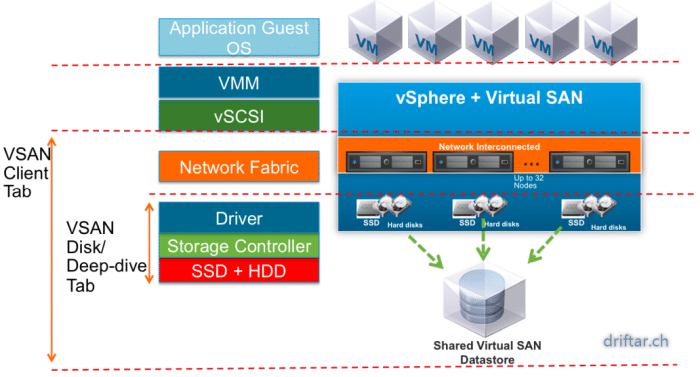

Creating vSAN is easy. You can tune it, set limits etc., very much like with VMs. Every single I/O goes through the hypervisor. There is no HBA and no fabric between host and storage. That’s a big plus for storage latency, which drops massively. With vSAN you use local resources (CPU, memory and storage). If you have to expand capacity you can easily add more disks (scale-up) or add a host (scale-out). Let’s take a look at the ESXi hypervisor. It already comes with HA, DRS, VDP and vSphere Replication. VMware Virtual SAN is compatible with these common features. It’s just a matter of licensing. Are you using different storage tiers? You don’t have to with vSAN. It’s policy-based. Limit IOPS to tame noisy neighbors or to guarantee an SLA.

You can build your own vSAN ready nodes (brownfield / specific pods), and then you always have to check the HCL. Or you choose from preconfigured vSAN ready nodes from your favorite hardware vendor. HPE, DELL etc. provide approved solutions. And last but not least there are the DELL EMC VxRail or even VxRack systems, preinstalled and preconfigured.

Use cases

VMware Virtual SAN has been used in production since version 6.0. One of the most common use cases is virtual desktop infrastructure (VDI). Customers also run their Exchange servers, transactional databases and so on with vSAN. There is no right or wrong. If you just want to free up space in your racks and replace old hardware, then you’re good to go. With two rack units you can replace four servers and a shared storage system which together need at least 10 units. Converged systems are a space saver. And don’t forget about the energy savings.

Module 4 – Virtual SAN concepts, requirements, install checklist

A vSAN datastore is accessible to all hosts in the vSAN cluster, whether or not they have their own local storage. You can have capacity hosts and compute hosts if you want. Other storage topologies can easily coexist with vSAN, there is no limitation. A vSAN datastore is built from disk groups. Every disk group is a single capacity unit of a host and provides cache and data. Each disk group must have exactly one flash disk for caching and one or more capacity disks. There is a limit of five disk groups per host / node.
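The disk group rules are simple enough to write down as a little check. This is only a sketch of the rules named above (one cache flash disk per group, at least one capacity disk, at most five groups per host); the `DiskGroup` class and the function name are hypothetical and not part of any VMware tool.

```python
from dataclasses import dataclass
from typing import List

MAX_DISK_GROUPS_PER_HOST = 5  # limit mentioned in the text above


@dataclass
class DiskGroup:
    """Hypothetical description of one disk group by its disk counts."""
    cache_disks: int     # flash devices used for caching
    capacity_disks: int  # devices used for capacity


def validate_host(disk_groups: List[DiskGroup]) -> List[str]:
    """Return a list of rule violations; an empty list means the layout is fine."""
    problems = []
    if len(disk_groups) > MAX_DISK_GROUPS_PER_HOST:
        problems.append(
            f"{len(disk_groups)} disk groups, limit is {MAX_DISK_GROUPS_PER_HOST}"
        )
    for number, group in enumerate(disk_groups, start=1):
        if group.cache_disks != 1:
            problems.append(f"disk group {number}: needs exactly one cache disk")
        if group.capacity_disks < 1:
            problems.append(f"disk group {number}: needs at least one capacity disk")
    return problems


# A host with two valid groups, and one with a group lacking capacity disks.
print(validate_host([DiskGroup(1, 4), DiskGroup(1, 2)]))
print(validate_host([DiskGroup(1, 0)]))
```

A host with no disk groups at all passes the check, which matches the compute-only hosts mentioned above.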

You need at least three vSAN hosts / nodes for production environments. Your data (for example a VM) is spread across the hosts in the cluster. Three components are stored in total: two replicas and a witness. If a host fails, the cluster needs a quorum to decide what to do with your data. That’s the reason why there are three components.
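The quorum idea can be played through in a few lines of Python. This is a simplified model under my own assumptions: every component counts as one vote, the object stays accessible while more than half of the components and at least one replica are reachable, and the host names are invented. The real vSAN logic has more details than this.

```python
from typing import Dict, Set

# Hypothetical placement of the three components on three hosts.
placement: Dict[str, str] = {
    "replica-1": "esx01",
    "replica-2": "esx02",
    "witness": "esx03",
}


def is_accessible(placement: Dict[str, str], failed_hosts: Set[str]) -> bool:
    """Simplified quorum check: majority of components plus one full replica."""
    alive = [comp for comp, host in placement.items() if host not in failed_hosts]
    has_quorum = len(alive) > len(placement) / 2
    has_replica = any(comp.startswith("replica") for comp in alive)
    return has_quorum and has_replica


print(is_accessible(placement, set()))               # True, nothing failed
print(is_accessible(placement, {"esx01"}))           # True, replica-2 and witness remain
print(is_accessible(placement, {"esx01", "esx03"}))  # False, only one of three left
```

With only two components there would be no majority after a single failure, which is the point of the witness.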

Install checklist

- HCL checked?

- disk controller in pass-through mode?

- host cache and capacity disks?

- VMkernel marked as vSAN?

- Multicast on network level?

- Uplink or VLAN considerations?

- 1 Gbit or 10 Gbit network connection?

- Cluster of three nodes?

- Standard switches or distributed virtual switches?
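Some of the checklist items above are plain yes/no questions, so they can be collected in a small pre-flight check. The sketch below is my own illustration: the `HostFacts` fields are filled in by hand, nothing is queried from vCenter or ESXi, and it assumes that every host in the list contributes storage.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HostFacts:
    """Facts about one host, collected by hand (hypothetical structure)."""
    name: str
    on_hcl: bool
    controller_passthrough: bool
    cache_disks: int
    capacity_disks: int
    vsan_vmkernel: bool


def preflight(hosts: List[HostFacts], multicast_ok: bool, min_hosts: int = 3) -> List[str]:
    """Return the open checklist items; an empty list means ready to go."""
    issues = []
    if len(hosts) < min_hosts:
        issues.append(f"only {len(hosts)} hosts, need at least {min_hosts}")
    if not multicast_ok:
        issues.append("multicast not confirmed on the vSAN network")
    for h in hosts:
        if not h.on_hcl:
            issues.append(f"{h.name}: hardware not checked against the HCL")
        if not h.controller_passthrough:
            issues.append(f"{h.name}: disk controller not in pass-through")
        if h.cache_disks < 1 or h.capacity_disks < 1:
            issues.append(f"{h.name}: cache or capacity disks missing")
        if not h.vsan_vmkernel:
            issues.append(f"{h.name}: no VMkernel adapter marked for vSAN")
    return issues


# Three example hosts; the third one has no VMkernel adapter tagged for vSAN.
hosts = [HostFacts(f"esx0{i}", True, True, 1, 2, True) for i in range(1, 4)]
hosts[2].vsan_vmkernel = False
for issue in preflight(hosts, multicast_ok=True):
    print(issue)
```

The design questions from the list (uplinks, VLANs, link speed, switch type) are left out on purpose, because they have no simple yes/no answer.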

Conclusion

Setting up vSAN is easy as pie. Meet the requirements and turn it on:

- set up vSAN networking

- enable vSAN on the cluster

- select automatic or manual disk claiming

- create disk groups if you selected manual disk claiming

And because it’s that easy, the official movie about setting up VMware Virtual SAN is only about three minutes long. There you go.

https://www.youtube.com/watch?v=1EDWKE93ivw